A decentralized AI experiment once confined to cryptocurrency circles has received a nod from Nvidia CEO Jensen Huang, suggesting that decentralized model training may be inching closer to the mainstream.

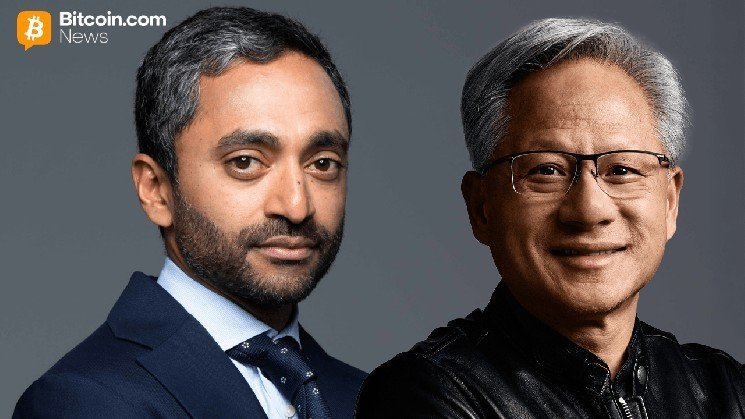

Chamath Palihapitiya spotlighted Bittensor’s Covenant-72B on an episode of the All-In Podcast as a concrete example of distributed artificial intelligence (AI) that goes beyond theory. Bittensor is a decentralized, blockchain-based network that runs a peer-to-peer marketplace in which machine learning models and AI compute are exchanged and rewarded.

Palihapitiya explained the initiative in plain terms: a large language model (LLM) trained without centralized infrastructure, powered instead by a network of independent contributors. “They were able to successfully train a fully distributed 4-billion-parameter LLaMA model with a lot of people contributing extra computation,” he said, calling it “a pretty crazy technical achievement.”

He framed it with a familiar analogy. “There are random people, and each person gets a little bit of a share,” Palihapitiya added, alluding to early distributed computing projects that harnessed idle hardware around the world.

Huang did not dismiss the idea. Instead, he leaned into a broader view of the AI market and suggested that decentralized and proprietary approaches are not mutually exclusive. “These two are not A or B, they are A and B,” Huang said. “There’s no question about that.”

This dual-track vision reflects the growing divisions and overlaps within AI. On one side are highly polished closed systems such as ChatGPT, Claude, and Gemini. On the other are open-weight, distributed models that developers and organizations can customize to suit their specific needs.

Huang made it clear that he believes both tracks are essential. “The model is the technology, not the product,” he said, noting that most users will continue to rely on sophisticated generic systems rather than building their own systems from scratch.

At the same time, he pointed to industries where customization is not optional. “All these industries need to gain domain expertise in a controlled way,” Huang explained, adding, “That can only come from an open model.”

This statement falls squarely in Bittensor’s wheelhouse. Developed through Subnet 3 (Templar), Covenant-72B is one of the largest distributed training runs to date, coordinating over 70 participants across standard internet connections without a central authority.

Technically, the model pushes boundaries. Built with 72 billion parameters and trained on approximately 1.1 trillion tokens, it relies on techniques such as compressed communication protocols and distributed data parallelism so that training can take place outside traditional data centers.
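To give a rough sense of why compressed communication matters here, the sketch below shows top-k gradient sparsification, one common way to shrink the updates exchanged in data-parallel training. It is an illustration under stated assumptions, not Covenant-72B's actual protocol, which the article does not describe. The function names, the choice of top-k, and the 2-bytes-per-value (16-bit) figure are all assumptions made for the example.

```python
# Illustrative sketch only. Top-k sparsification is an assumed stand-in for
# "compressed communication"; it is not taken from the Covenant-72B project.

def top_k_compress(grad, k):
    """Keep the k largest-magnitude entries as (index, value) pairs."""
    ranked = sorted(range(len(grad)), key=lambda i: abs(grad[i]), reverse=True)
    return [(i, grad[i]) for i in sorted(ranked[:k])]


def decompress(pairs, size):
    """Rebuild a dense gradient, filling dropped entries with zero."""
    dense = [0.0] * size
    for i, v in pairs:
        dense[i] = v
    return dense


def average_compressed(worker_grads, k):
    """Data-parallel step: each worker sends only its top-k entries,
    and the sparse updates are summed and averaged across workers."""
    size = len(worker_grads[0])
    total = [0.0] * size
    for grad in worker_grads:
        for i, v in top_k_compress(grad, k):
            total[i] += v
    return [t / len(worker_grads) for t in total]


def dense_gradient_gb(num_params, bytes_per_value=2):
    """Size of one uncompressed gradient in gigabytes (assumes 16-bit values)."""
    return num_params * bytes_per_value / 1e9


if __name__ == "__main__":
    workers = [[0.1, -2.0, 0.05, 1.5], [1.0, 0.2, -3.0, 0.1]]
    print(average_compressed(workers, k=2))
    print(dense_gradient_gb(72_000_000_000), "GB per uncompressed exchange")
```

Under the 16-bit assumption, a full gradient for a 72-billion-parameter model is on the order of 144 GB, which is impractical to send repeatedly over ordinary internet links. Sending only a small fraction of entries per step is one way such a run could become feasible.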

Performance metrics suggest this is more than just an experiment. Benchmark results show it competing with established centralized models, and this detail helps explain why this project is gaining traction beyond a crypto-native audience.

The market also took notice. The project’s token, TAO, rose 24% after a video of Palihapitiya’s remarks went viral on social media.

Still, Huang’s comments suggest that the real story is not conflict but coexistence. Proprietary AI systems will remain the mainstream choice for everyday users, while open and decentralized models will have a role in specialized, cost-sensitive, or sovereignty-driven applications.

Nvidia’s CEO also outlined a practical strategy for startups: start open, then build proprietary advantages on top. “All the startups we invest in now are open source first and then move to a proprietary model,” he said.

In other words, the future of AI may not belong to a single architecture or philosophy. It may belong to those who can navigate both and know when to use each.

Frequently asked questions 🔎

- What is Bittensor Covenant-72B?

A 72-billion-parameter language model trained through a decentralized network of contributors without any centralized infrastructure.

- What does Jensen Huang say about decentralized AI?

He said that open AI models and proprietary AI models will coexist, and that the relationship is “A and B” and not a choice between the two.

- Why is this development important?

This shows that large-scale AI models can be trained outside of traditional data centers, challenging assumptions about infrastructure needs.

- How will this impact the AI industry?

This supports a hybrid future where centralized platforms and decentralized models play different roles across industries.